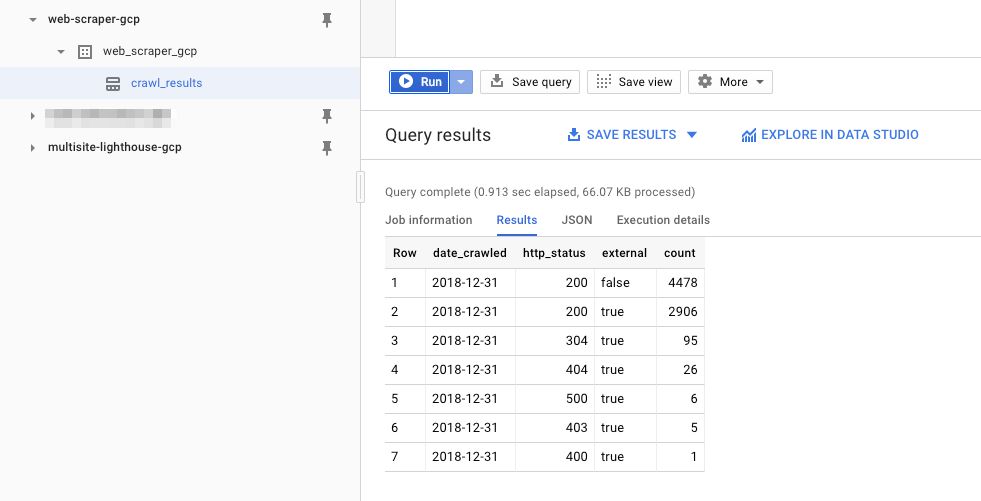

Ever since it was announced that server-side tagging in Google Tag Manager would run on the Google Cloud Platform stack, my imagination has been running wild. Because it runs on GCP, the potential for integrations with other GCP components is practically limitless. The output to Cloud Logging …